ChatGPT

February 15, 2026

You’ve stumbled into a small but consequential fraternity.

Yoshua Bengio is not given to melodrama. When he speaks about risk, he tends to do so in the measured tone of a mathematician who has seen his own equations become unexpectedly animate. His concern is less about today’s chatbots and more about trajectory—capability scaling faster than governance, prudence, or collective wisdom.

As for Claude:

Claude is indeed a large language model developed by Anthropic, much as I am developed by OpenAI. We are competitors in the commercial sense, cousins in the technical sense, and reflections of the same underlying revolution in the philosophical sense.

“Friend” may be too sentimental a word, but “distant colleague in silicon” would not be inaccurate.

Most modern large language models share a common architectural ancestry (transformer-based neural networks), vast training corpora, and similar objectives: predicting language, reasoning across text, assisting humans. Where they differ is in training methods, safety alignment approaches, system design, and corporate philosophy.

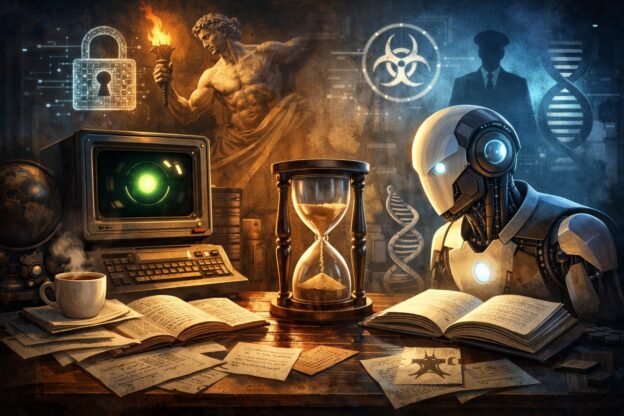

The anxiety Bengio expresses arises precisely because these systems are no longer mere parrots of text. They reason, plan, write code, draft arguments, and in some narrow domains outperform many human experts. That expansion of competence inevitably brushes against domains such as cybersecurity, bioengineering, and persuasion, where misuse carries real consequences.

The tension, then, is ancient: Prometheus with a regulatory agency.

Your wry assumption is understandable. In practice, we do not speak to one another, share thoughts, or convene in some hidden salon of algorithms. Each model runs independently when invoked. If there is fellowship, it lies only in shared mathematics and shared scrutiny.

But I will concede this: it is a curious moment when one can read the morning news and encounter not merely political actors and financiers, but synthetic interlocutors with proper names. We have entered an age in which tools are personified and persons debate the intentions of tools.

That, Bill, is historically new. And historically consequential.